A regulator walks into your office and asks a simple question: "Where did the data come from that trained the model that just denied this person's insurance claim?" Your data engineering lead opens three different dashboards, cross-references a Confluence page from 2024, and after forty minutes admits they cannot provide a definitive answer. The regulator thanks them politely and opens a formal investigation.

This is not a hypothetical edge case. This is the baseline reality for most organizations running production AI systems in 2026. They have models. They have outputs. They do not have a traceable line from data source to decision.

Data lineage is the unexciting, infrastructure-level work that nobody wants to fund but everyone needs when things go wrong. It is the practice of tracking every dataset from its point of origin through every transformation, merge, cleaning step, and training run, all the way to the model outputs it shapes.

Most organizations skipped this step. They were moving fast, assembling training datasets from internal databases, public scrapes, and vendor-supplied packages. The priority was model accuracy, not documentary hygiene. The result is a generation of AI systems built on data with no provenance record, no consent audit, and no contamination checks.

The EU AI Act requires providers of high-risk systems to document their training data in enough detail to allow meaningful oversight. Article 10 sets the bar: training, validation, and testing datasets must be relevant, sufficiently representative, and, to the best extent possible, free of errors and complete for their intended purpose. You cannot demonstrate any of these properties if you do not know what is in the dataset or where it came from.

Beyond regulation, the practical consequences are severe. Without lineage, you cannot perform a data recall. If you discover that a specific data source contained biased, copyrighted, or personally identifiable information, you need to identify every model that was trained on it, every downstream system that consumed those models' outputs, and every decision they influenced. With no lineage record, that search has nothing to start from. You are left with two options: retrain everything from scratch at enormous cost, or accept the liability and hope nobody notices.
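To make the recall exercise concrete, here is a minimal sketch of what it looks like once lineage is recorded as a graph. It assumes lineage is stored as simple upstream-to-downstream edges; every identifier (`source:vendor_claims_2023`, `model:claim_scorer_1.4`, and so on) is invented for illustration, and a real metadata platform would expose this through its own query interface.

```python
from collections import defaultdict, deque

# Hypothetical lineage edges as (upstream, downstream) pairs.
# All names are illustrative, not taken from any real system.
EDGES = [
    ("source:vendor_claims_2023", "dataset:claims_v3"),
    ("source:internal_crm", "dataset:claims_v3"),
    ("source:internal_crm", "dataset:churn_v1"),
    ("dataset:claims_v3", "model:claim_scorer_1.4"),
    ("dataset:churn_v1", "model:churn_predictor_2.0"),
    ("model:claim_scorer_1.4", "system:claims_portal"),
]


def build_graph(edges):
    """Index edges so each node maps to its direct downstream consumers."""
    graph = defaultdict(list)
    for upstream, downstream in edges:
        graph[upstream].append(downstream)
    return graph


def affected_by(graph, start):
    """Return every node reachable downstream of `start` (breadth-first)."""
    seen = set()
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for child in graph.get(node, []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return seen


graph = build_graph(EDGES)
impacted = affected_by(graph, "source:vendor_claims_2023")
print(sorted(impacted))
```

In this toy graph, recalling the vendor source flags one dataset, one model, and one downstream system, while the churn model is left alone because it never touched that source. That scoping is the whole point: the recall becomes a query instead of a full retrain.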

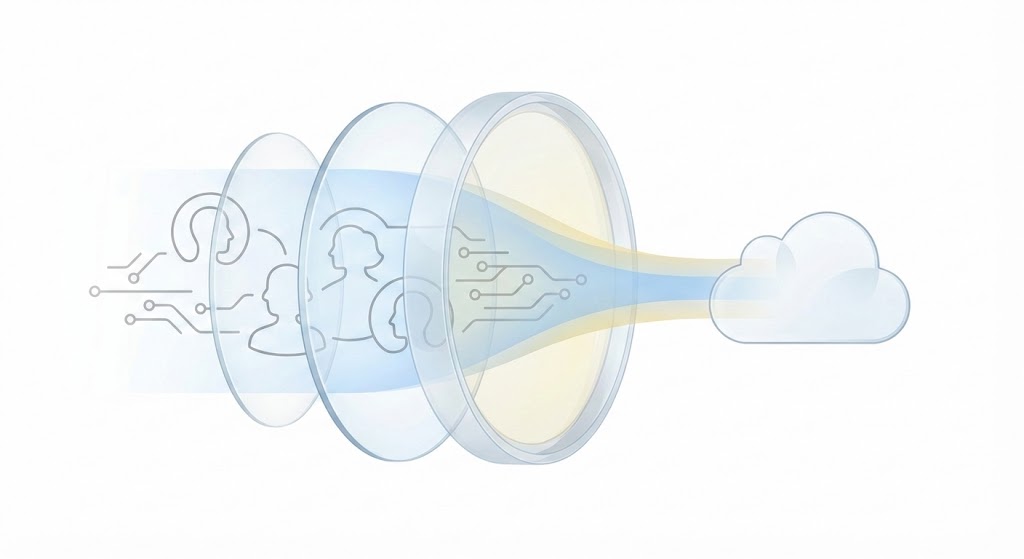

A proper lineage system tracks three layers. The data layer records every source, its acquisition date, its legal basis for use, and every transformation applied to it. The model layer ties each training run to a specific, versioned snapshot of the data. The output layer connects each individual prediction or decision back to the model version and, by extension, the data that produced it.
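The three layers can be sketched as three record types linked by identifiers. This is one possible shape, not a standard schema: the class names, field names, and sample values below are all assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class SourceRecord:
    """Data layer: where a source came from and what was done to it."""
    source_id: str
    acquired_on: date
    legal_basis: str
    transformations: tuple


@dataclass(frozen=True)
class TrainingRun:
    """Model layer: ties a model version to a versioned data snapshot."""
    model_version: str
    snapshot_id: str
    source_ids: tuple


@dataclass(frozen=True)
class DecisionRecord:
    """Output layer: ties one prediction to the model that produced it."""
    decision_id: str
    model_version: str


def trace_decision(decision, runs, sources):
    """Walk output layer -> model layer -> data layer for one decision."""
    run = runs[decision.model_version]
    return [sources[source_id] for source_id in run.source_ids]


# Hypothetical sample records.
sources = {
    "vendor_claims_2023": SourceRecord(
        "vendor_claims_2023", date(2023, 6, 1), "contract",
        ("deduplicate", "mask_pii"),
    ),
    "internal_crm": SourceRecord(
        "internal_crm", date(2024, 1, 15), "legitimate_interest",
        ("drop_test_accounts",),
    ),
}
runs = {
    "claim_scorer_1.4": TrainingRun(
        "claim_scorer_1.4", "claims_v3@2024-02-01",
        ("vendor_claims_2023", "internal_crm"),
    ),
}
decision = DecisionRecord("decision-0001", "claim_scorer_1.4")

for record in trace_decision(decision, runs, sources):
    print(record.source_id, record.acquired_on, record.legal_basis)
```

The design choice worth noting is that a decision stores only a model version, and a training run stores only a snapshot and source identifiers. Each layer points one step back, so the full trail is recovered by following links rather than by copying provenance into every prediction.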

The tooling exists. Metadata platforms like Apache Atlas, Amundsen, and commercial alternatives from cloud providers can automate much of this tracking. The technical challenge is not insurmountable. The real obstacle is organizational: getting data engineers, ML engineers, and compliance teams to agree on a shared schema and maintain it consistently across every pipeline.

Implementing lineage retroactively is painful. For existing models, you face a choice between reconstructing provenance from incomplete records or accepting that some legacy systems will carry an "unknown origin" flag in your risk register. Both options have costs.

For new systems, adding lineage tracking increases pipeline complexity and introduces latency. Every transformation step now requires a metadata write. Your data engineers will complain about the overhead, and your cloud bill will increase. Storage costs for metadata alone can become significant at scale.
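A rough sketch of what "a metadata write per transformation" can mean in practice: a decorator that fingerprints a step's input and output and appends a record to a log. The in-memory `LINEAGE_LOG` list stands in for whatever metadata store you actually use, and the function and step names are hypothetical.

```python
import functools
import hashlib
import json
from datetime import datetime, timezone

# Stand-in for a real metadata store; an assumption for this sketch.
LINEAGE_LOG = []


def fingerprint(rows):
    """Content hash of a list of dict rows, stable across key order."""
    payload = json.dumps(rows, sort_keys=True, default=str).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def tracked(step_name):
    """Wrap a transformation so every call emits one lineage record."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(rows):
            # Hash the input first, in case the step mutates it in place.
            input_hash = fingerprint(rows)
            rows_in = len(rows)
            result = func(rows)
            LINEAGE_LOG.append({
                "step": step_name,
                "input_hash": input_hash,
                "output_hash": fingerprint(result),
                "rows_in": rows_in,
                "rows_out": len(result),
                "recorded_at": datetime.now(timezone.utc).isoformat(),
            })
            return result
        return wrapper
    return decorator


@tracked("drop_missing_age")
def drop_missing_age(rows):
    return [r for r in rows if r.get("age") is not None]


@tracked("normalize_country")
def normalize_country(rows):
    return [{**r, "country": r["country"].upper()} for r in rows]


raw = [
    {"age": 41, "country": "de"},
    {"age": None, "country": "fr"},
]
clean = normalize_country(drop_missing_age(raw))

for entry in LINEAGE_LOG:
    print(entry["step"], entry["rows_in"], "->", entry["rows_out"])
```

Because each record carries both an input and an output hash, consecutive steps chain together: the output hash of one step should equal the input hash of the next. The overhead is also visible here, since every step now serializes and hashes its data twice, which is exactly the cost the pipeline owners will raise.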

But the cost of not having lineage is binary and catastrophic. When the audit comes, you either have the trail or you do not. There is no partial credit.

Start with your highest-risk model. Trace its training data back to its sources. If you cannot complete that exercise in a single afternoon, you have a lineage problem that needs immediate investment. Do not wait for a regulator to ask the question you cannot answer.